AutoML: 7 Tools to Get You Started with Data Science Automation

An overview of automated machine learning and seven tools for getting started.

Automated machine learning (AutoML) enables you to automate parts of the machine learning process. There is a wide range of automation tools, created for different purposes. In general, the benefits of these tools fall into three categories: improving productivity, enforcing standardization, and promoting democratization.

This article explains what AutoML is, the key advantages of AutoML, and what you can actually automate in machine learning. It also reviews seven top AutoML tools.

What Is Automated Machine Learning?

Automated machine learning (AutoML) is the use of automation, machine learning, and best practices to make ML accessible to a wider range of users. It is designed to help organizations speed up their training and use of ML models despite limited or no access to ML experts, such as data scientists.

AutoML enables organizations to build and deploy models using templates, frameworks, and predefined processes. This speeds time to completion and helps ensure that models are as functional as possible.

Advantages of AutoML

There are three main advantages to incorporating AutoML. These are:

- Productivity—automation reduces the manual resources needed to monitor and perform repetitive ML tasks. This frees teams to focus on model refinement and packaging.

- Standardization—automated pipelines help reduce the chance of configuration errors and ensure that training and tests are performed uniformly.

- Democratization—AutoML lowers the barrier to entry for organizations with little to no ML expertise. This increases competitiveness and can increase innovation.

What Can We Automate in Machine Learning?

Although it might be nice to fully automate ML processes, that is not what AutoML does. Rather, it focuses on a few areas with high repetition. These areas include the following.

Hyperparameter optimization

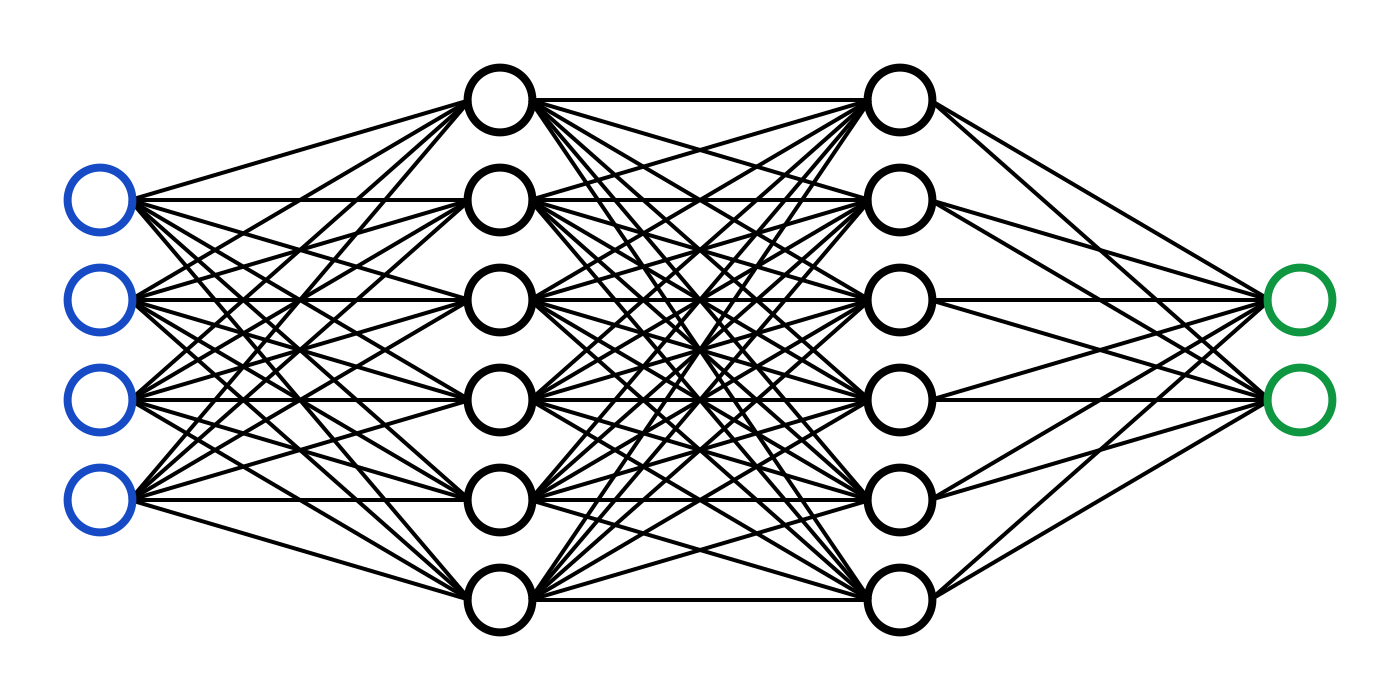

Hyperparameters are values that define how a model is trained and directly impact the accuracy of the resulting model. Examples include the learning rate, the number of hidden units or layers, the activation functions, and the number of epochs.

Hyperparameter optimization is a process of running your algorithm with different combinations of hyperparameters. This is done to determine which set produces the most accurate result. This process is performed with search algorithms, such as grid search, random search, or Bayesian methods.
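The search process described above can be sketched in a few lines of plain Python. This is a minimal illustration, not how any particular AutoML tool implements it: the toy dataset, the `train_linear` model, and the hyperparameter ranges are all assumptions chosen to keep the example small. It shows grid search (every combination) and random search (sampled combinations), both scored on held-out validation data.

```python
import itertools
import random

# Toy data following y = 3x + 1 (hypothetical, for illustration only).
TRAIN_X = [0.0, 0.5, 1.0, 1.5, 2.0]
TRAIN_Y = [3 * x + 1 for x in TRAIN_X]
VAL_X = [0.25, 0.75, 1.25, 1.75]
VAL_Y = [3 * x + 1 for x in VAL_X]


def train_linear(xs, ys, learning_rate, epochs):
    """Fit y = w*x + b with batch gradient descent and return (w, b)."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        errors = [w * x + b - y for x, y in zip(xs, ys)]
        grad_w = 2 / n * sum(e * x for e, x in zip(errors, xs))
        grad_b = 2 / n * sum(errors)
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b


def validation_error(params):
    """Train with the given hyperparameters and return validation MSE."""
    w, b = train_linear(TRAIN_X, TRAIN_Y, **params)
    return sum((w * x + b - y) ** 2 for x, y in zip(VAL_X, VAL_Y)) / len(VAL_X)


def grid_search(grid):
    """Evaluate every combination in the grid and return the best one."""
    names = list(grid)
    candidates = [
        dict(zip(names, combo)) for combo in itertools.product(*grid.values())
    ]
    return min(candidates, key=validation_error)


def random_search(n_trials, seed=0):
    """Evaluate randomly sampled combinations and return the best one."""
    rng = random.Random(seed)
    candidates = [
        {
            # Sample the learning rate on a log scale between 0.001 and 0.1.
            "learning_rate": 10 ** rng.uniform(-3, -1),
            "epochs": rng.randint(10, 200),
        }
        for _ in range(n_trials)
    ]
    return min(candidates, key=validation_error)


best_grid = grid_search({"learning_rate": [0.001, 0.01, 0.1], "epochs": [10, 100]})
best_random = random_search(n_trials=20)
print("grid search:", best_grid, validation_error(best_grid))
print("random search:", best_random, validation_error(best_random))
```

Grid search is exhaustive but grows multiplicatively with each added hyperparameter, while random search keeps the number of trials fixed. Bayesian methods go a step further by using earlier results to choose the next combination to try, which this sketch does not attempt.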

Model selection

Model selection is a process of narrowing down candidate models for your training. It applies models to data to determine which model produces the optimum combination of performance, maintainability, and complexity. It is impacted by what resources are available to you and determines how your ML pipeline is structured.

Model selection is automated similarly to hyperparameter optimization, by running through multiple models and comparing the results. It often involves the same sort of search methods as hyperparameter optimization as well. However, it may also rely on statistical criteria that penalize model complexity, such as the Bayesian Information Criterion (BIC) or Akaike Information Criterion (AIC).
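As a rough illustration of criterion-based model selection, the sketch below compares two candidate models using AIC and BIC. It assumes least-squares models with Gaussian errors, for which the criteria can be written (up to an additive constant) as n·ln(RSS/n) + 2k for AIC and n·ln(RSS/n) + k·ln(n) for BIC, where k is the number of fitted parameters. The data and the two candidate models are hypothetical.

```python
import math

# Hypothetical data: roughly y = 2x + 1 with small fixed deviations.
XS = [float(i) for i in range(10)]
NOISE = [0.3, -0.2, 0.1, -0.4, 0.2, 0.0, -0.1, 0.3, -0.3, 0.1]
YS = [2 * x + 1 + e for x, e in zip(XS, NOISE)]


def fit_constant(xs, ys):
    """Candidate 1: predict the mean of y (one fitted parameter)."""
    mean_y = sum(ys) / len(ys)
    return lambda x: mean_y


def fit_line(xs, ys):
    """Candidate 2: ordinary least-squares line (two fitted parameters)."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return lambda x: slope * x + intercept


def residual_sum_of_squares(model, xs, ys):
    return sum((model(x) - y) ** 2 for x, y in zip(xs, ys))


def aic(n, k, rss):
    """AIC for a Gaussian least-squares fit, up to an additive constant."""
    return n * math.log(rss / n) + 2 * k


def bic(n, k, rss):
    """BIC for a Gaussian least-squares fit, up to an additive constant."""
    return n * math.log(rss / n) + k * math.log(n)


# Each candidate maps to (fitting function, number of fitted parameters).
candidates = {"constant": (fit_constant, 1), "line": (fit_line, 2)}
n = len(XS)
scores = {}
for name, (fit, k) in candidates.items():
    rss = residual_sum_of_squares(fit(XS, YS), XS, YS)
    scores[name] = {"aic": aic(n, k, rss), "bic": bic(n, k, rss)}

# Lower is better for both criteria.
best_by_aic = min(scores, key=lambda name: scores[name]["aic"])
best_by_bic = min(scores, key=lambda name: scores[name]["bic"])
print(scores)
print("best by AIC:", best_by_aic, "| best by BIC:", best_by_bic)
```

Both criteria reward a good fit and charge a cost for every extra parameter. BIC's penalty grows with the number of samples, so it tends to favor simpler models than AIC on larger datasets.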

Feature selection

Features are the input variables that your model uses to make classifications or predictions. Feature selection involves deciding how many features to use, which features to use, and how those features are defined. The features you select directly impact the complexity of your model and its performance.

Feature selection is automated through the application of algorithms during testing, such as wrapper, filter, or embedded methods. During testing, features and combinations of features are evaluated to determine how accurate the resulting classifications or predictions are.
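To make one of these approaches concrete, here is a minimal sketch of a filter method: each feature is scored independently by its absolute Pearson correlation with the target, and the top-scoring features are kept. The feature names and values are made up for illustration. Real tools typically offer several scoring functions, and wrapper or embedded methods would involve training a model, which this example does not do.

```python
import math


def pearson(a, b):
    """Pearson correlation between two equal-length numeric sequences."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    if var_a == 0 or var_b == 0:
        return 0.0  # A constant column carries no information.
    return cov / math.sqrt(var_a * var_b)


def select_top_k(features, target, k):
    """Rank features by |correlation| with the target and keep the top k."""
    scores = {name: abs(pearson(col, target)) for name, col in features.items()}
    ranked = sorted(scores, key=scores.get, reverse=True)
    return ranked[:k], scores


# Hypothetical dataset: the target is driven mostly by "size".
features = {
    "size": [1, 2, 3, 4, 5, 6],
    "rooms": [1, 1, 2, 2, 3, 3],
    "random_id": [5, 1, 4, 2, 6, 3],
    "constant": [7, 7, 7, 7, 7, 7],
}
target = [10, 20, 30, 40, 50, 60]

selected, scores = select_top_k(features, target, k=2)
print("scores:", scores)
print("selected:", selected)
```

Filter methods like this are fast because they never train a model, but they score each feature in isolation and can miss features that are only useful in combination.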

What Is MLOps?

Machine learning operations (MLOps) is a set of practices that applies DevOps principles to machine learning models. The purpose of MLOps is to help standardize the development and deployment of machine learning models. This is accomplished through reliable documentation of processes and the creation of safeguards to ensure that processes are performed uniformly.

Some of the standard practices associated with MLOps include:

- Building on APIs from existing AI services

- Using modular processes and components

- Developing models in parallel to reduce the impact of single model failures

- Using pre-trained models as proofs of concept (PoCs)

- Building on generalized algorithms to develop specific models

- Using publicly available data sources to bridge gaps in training data

7 Top AutoML Tools

If you’re ready to get started with AutoML, there is a growing number of tools available to you. Below are a few to consider using.

1. Run:AI

Run:AI is a proprietary platform for automating machine learning infrastructure. In terms of AutoML, the platform offers controls for automating resource management, as well as workload orchestration for your entire machine learning infrastructure.

You can use Run:AI to pool GPU compute resources, set up GPU quotas, and continuously adjust resource allocation. These features enable you to optimize your compute resources and ensure that even highly intensive deep learning models get the resources they need at scale.

2. AutoKeras

AutoKeras is an open source library based on Keras that you can use for classification and regression of images, text, and structured data. It enables you to use pre-built blocks to construct a model, leaving you to focus on high-level architecture. AutoKeras supports use with Python 3.5 and up and TensorFlow 2.1.0 and up.

3. Auto-WEKA

Auto-WEKA is an open source library that you can use to optimize your hyperparameter selection. It uses Bayesian optimization to select a learning algorithm and hyperparameters from those available in the WEKA package. Auto-WEKA has the same requirements as WEKA and includes a graphical user interface (GUI) for ease of use.

4. DataRobot Automated Machine Learning

DataRobot is a proprietary platform that you can use to automate and optimize model creation. It is designed for end-to-end support of model development, training, and deployment.

DataRobot provides a range of features, including data formatting, feature engineering, model selection, hyperparameter tuning, and monitoring. It also offers pretrained models, a data catalog, and a user-friendly GUI with visualizations of the entire training and deployment process.

5. H2O AutoML

H2O is an open source platform for ML that is distributed and runs in-memory. It supports a wide range of ML and statistical algorithms, including deep learning, generalized linear models, and gradient boosted machines. On their own, these algorithms must be configured manually, but the open source platform also includes an automation module called H2O AutoML.

H2O AutoML enables you to train and tune models with automatic feature selection and extraction, hyperparameter optimization, and use of ensembles (multiple models for greater performance). You can use H2O AutoML from a web GUI. It integrates with Hadoop, Spark, and Kubernetes.

6. MLBox

MLBox is an open source Python library that you can use to automate many aspects of model training. These aspects include data preprocessing, feature selection, and hyperparameter optimization. It also includes predictive models for regression and classification, such as LightGBM, stacking, and deep learning.

7. auto-sklearn

auto-sklearn is an open source toolkit based on scikit-learn that you can use to perform model selection, feature engineering, and hyperparameter tuning. It includes features that enable you to leverage Bayesian optimization, ensembles, and meta-learning for more accurate models and training.

When using auto-sklearn, you can set time and memory limits for training, restrict your search space, and control preprocessing. You can also inspect training statistics and results and perform parallel computations.

Conclusion

AutoML tools enable you to automate machine learning tasks, including hyperparameter optimization, model selection, and feature selection. When choosing an AutoML tool, you should first assess your project, identify areas that need improvement, and then select the tools that fit your needs. You should also check integration options to ensure that the AutoML tool of your choice is compatible with your existing infrastructure.